Previously I talked about how we created an interface that lets users upload files to a SharePoint site. Logic Apps then replicates those files to our Azure Data Lake, which kicks off our data processing in Synapse.

We also had the opposite use case: we wanted to produce a data set as the output of our data processing and make it accessible to users in a simple way, such as uploading the file to a SharePoint document library.

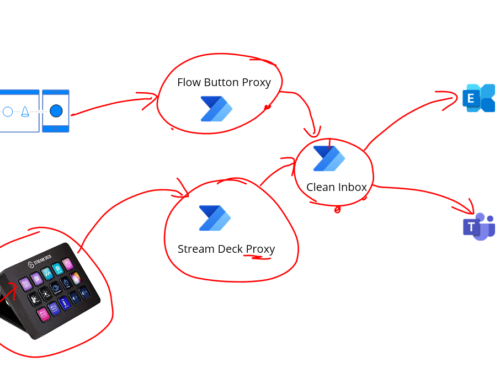

The below diagram shows what this looks like:

Design Considerations

- We wanted a Synapse pipeline to be able to put a file into SharePoint. There is no out-of-the-box connector for writing files to SharePoint, so we use a Logic App as a proxy: the pipeline passes a message to the Logic App, which then uses a Logic App connector to add the file to SharePoint

- We could either 1) let Synapse pass the file content to the Logic App as the message body, or 2) let the Synapse pipeline pass a path to the file in the data lake, which the Logic App would then read. We chose option 1, but both are equally viable

- The Synapse pipeline would pass some headers to the Logic App telling it where to put the file: the site, the folder path and the file name

Implementation

The implementation of the Logic App is very straightforward. We use a Request trigger so the Synapse pipeline can make an HTTP call to start the Logic App.

The SharePoint connector configuration is pretty simple: we just pass in variables that take their values from the headers of the incoming HTTP request to the Logic App. These set the site, folder and file name. The file content is then just the body of the HTTP request to the Logic App.
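To make the header-to-connector mapping concrete, here is a minimal Python sketch of the same logic. It is only an illustration of the idea, not the Logic App definition itself. The header names (`x-sharepoint-site`, `x-sharepoint-folder`, `x-sharepoint-filename`) and the `CreateFileInputs` type are hypothetical, since this post does not name the actual headers.

```python
from dataclasses import dataclass
from typing import Mapping

# Hypothetical header names, chosen for illustration only.
SITE_HEADER = "x-sharepoint-site"
FOLDER_HEADER = "x-sharepoint-folder"
FILE_NAME_HEADER = "x-sharepoint-filename"


@dataclass(frozen=True)
class CreateFileInputs:
    """The values a 'create file in SharePoint' step would need."""
    site: str
    folder: str
    file_name: str
    content: bytes


def map_request_to_create_file(headers: Mapping[str, str], body: bytes) -> CreateFileInputs:
    """Turn an incoming HTTP request into the inputs for the file upload."""
    # HTTP header names are case-insensitive, so normalise before looking them up.
    normalised = {name.lower(): value for name, value in headers.items()}

    required = (SITE_HEADER, FOLDER_HEADER, FILE_NAME_HEADER)
    missing = [name for name in required if not normalised.get(name)]
    if missing:
        raise ValueError("missing required headers: " + ", ".join(missing))

    # The request body is passed through unchanged as the file content.
    return CreateFileInputs(
        site=normalised[SITE_HEADER],
        folder=normalised[FOLDER_HEADER],
        file_name=normalised[FILE_NAME_HEADER],
        content=body,
    )
```

Rejecting a request with a missing header early is a design choice of this sketch; it keeps a badly configured pipeline call from writing a file to an unintended location.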

In Synapse we then use a Web activity in the pipeline to call the Logic App, passing the appropriate headers and the content of the input file that has been read.
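The same call can be reproduced outside Synapse, which may be handy for testing the Logic App on its own. The Python sketch below builds the equivalent HTTP POST with the standard library. The header names and the function names are assumptions made for illustration, and the URL passed in would be whatever callback URL your Logic App's trigger exposes.

```python
import urllib.request

# Hypothetical header names; they must match whatever the Logic App reads.
SITE_HEADER = "x-sharepoint-site"
FOLDER_HEADER = "x-sharepoint-folder"
FILE_NAME_HEADER = "x-sharepoint-filename"


def build_logic_app_request(
    logic_app_url: str,
    site: str,
    folder: str,
    file_name: str,
    content: bytes,
) -> urllib.request.Request:
    """Build the POST that stands in for the pipeline's Web activity call."""
    headers = {
        SITE_HEADER: site,
        FOLDER_HEADER: folder,
        FILE_NAME_HEADER: file_name,
        # Send the file as raw bytes; the body is the file content itself.
        "Content-Type": "application/octet-stream",
    }
    return urllib.request.Request(
        logic_app_url, data=content, headers=headers, method="POST"
    )


def send(request: urllib.request.Request, timeout_seconds: float = 30.0) -> int:
    """Send the request and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=timeout_seconds) as response:
        return response.status
```

In the pipeline itself, the Web activity's URL, method, headers and body settings play the roles of the arguments above.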

In summary, this worked out to be a simple way to let our Synapse pipelines push files to SharePoint, so that users can work with some simple datasets that are output from our data processing.